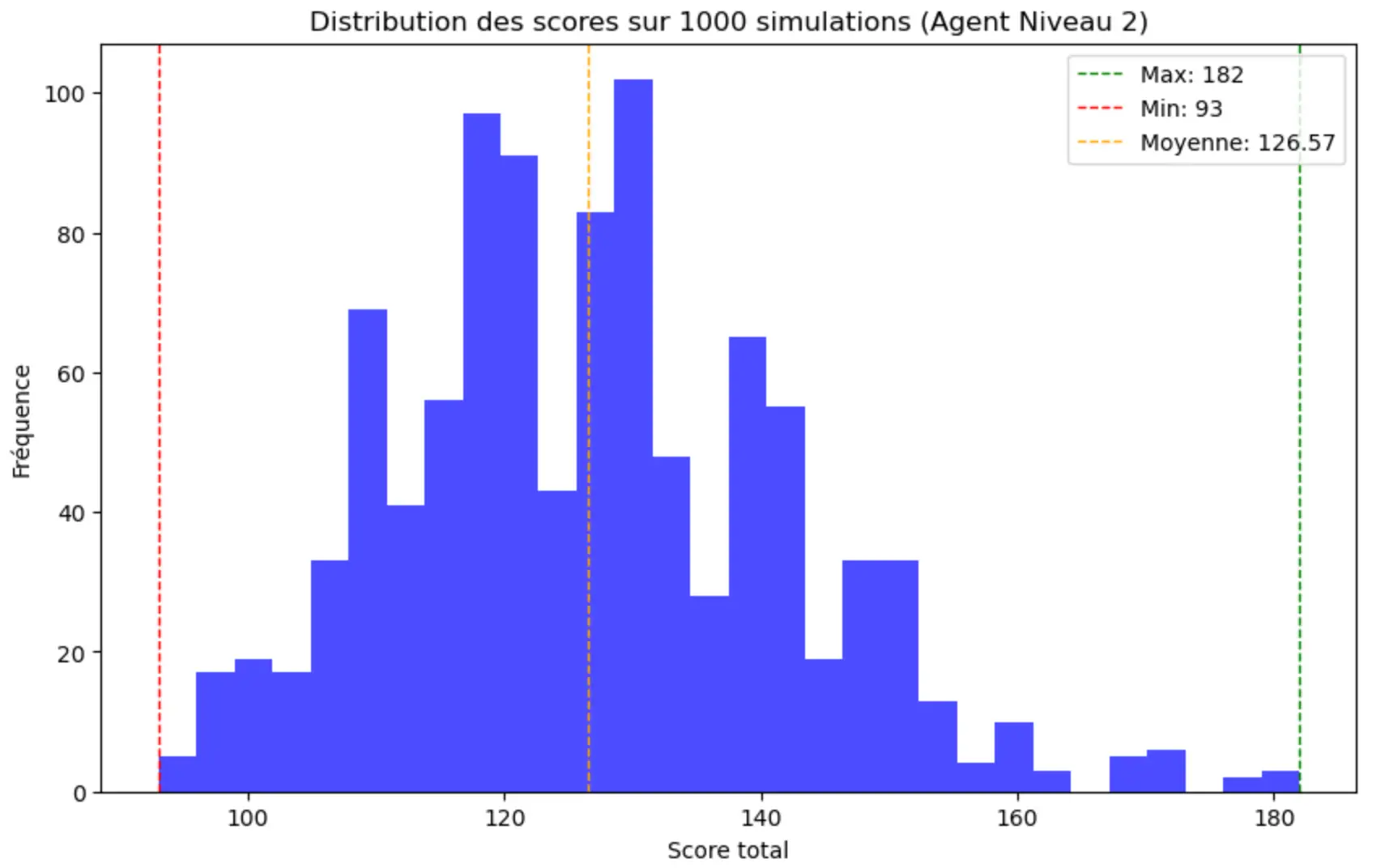

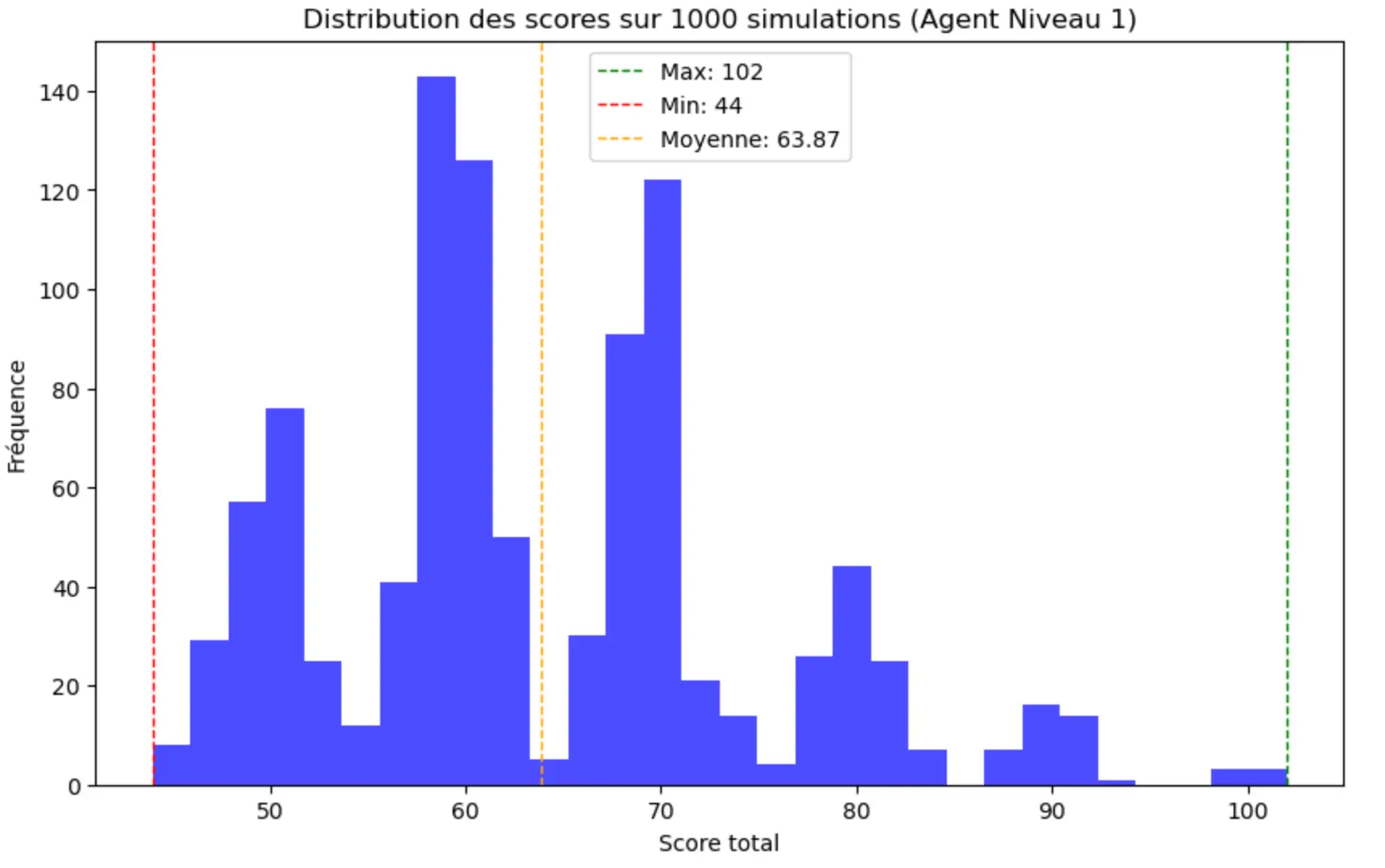

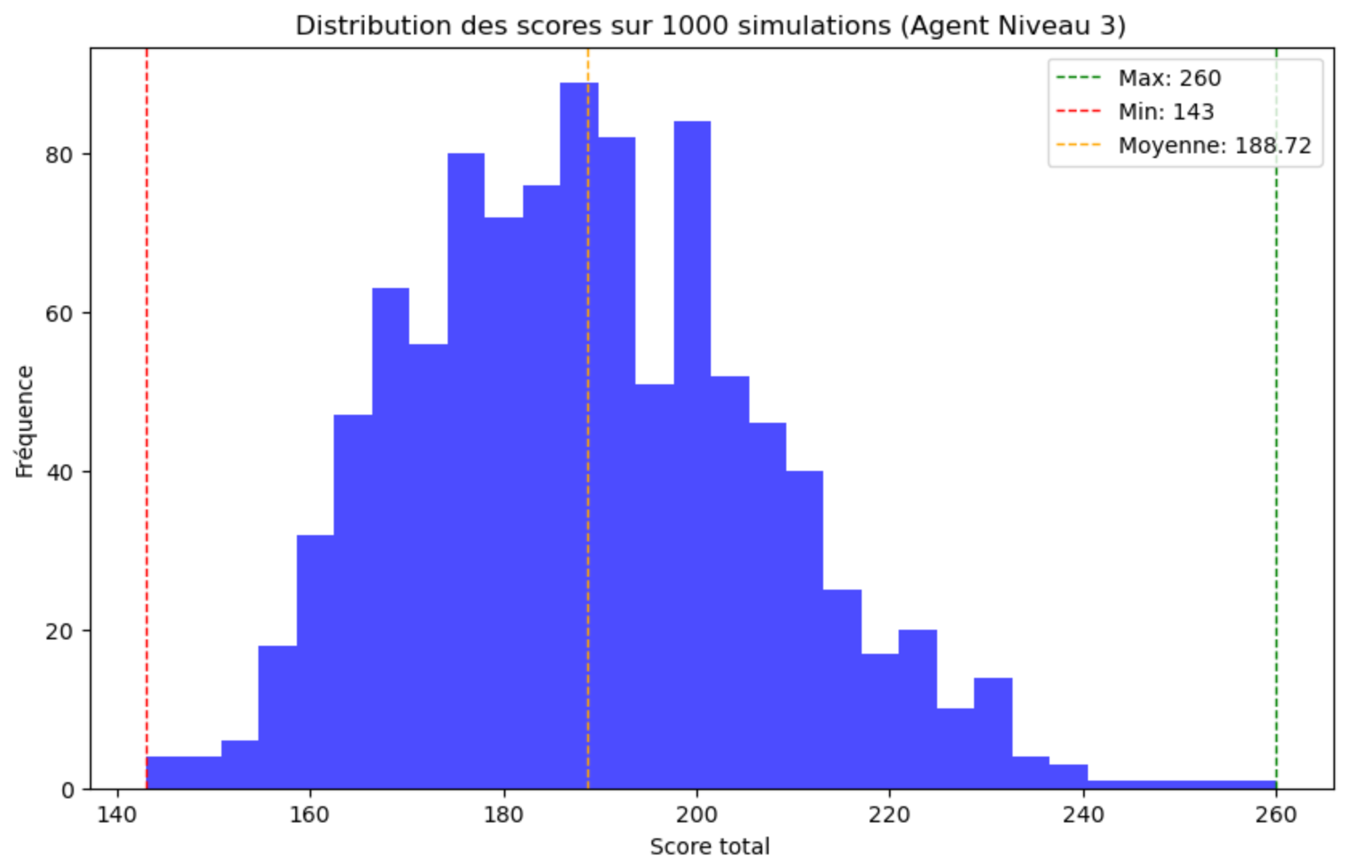

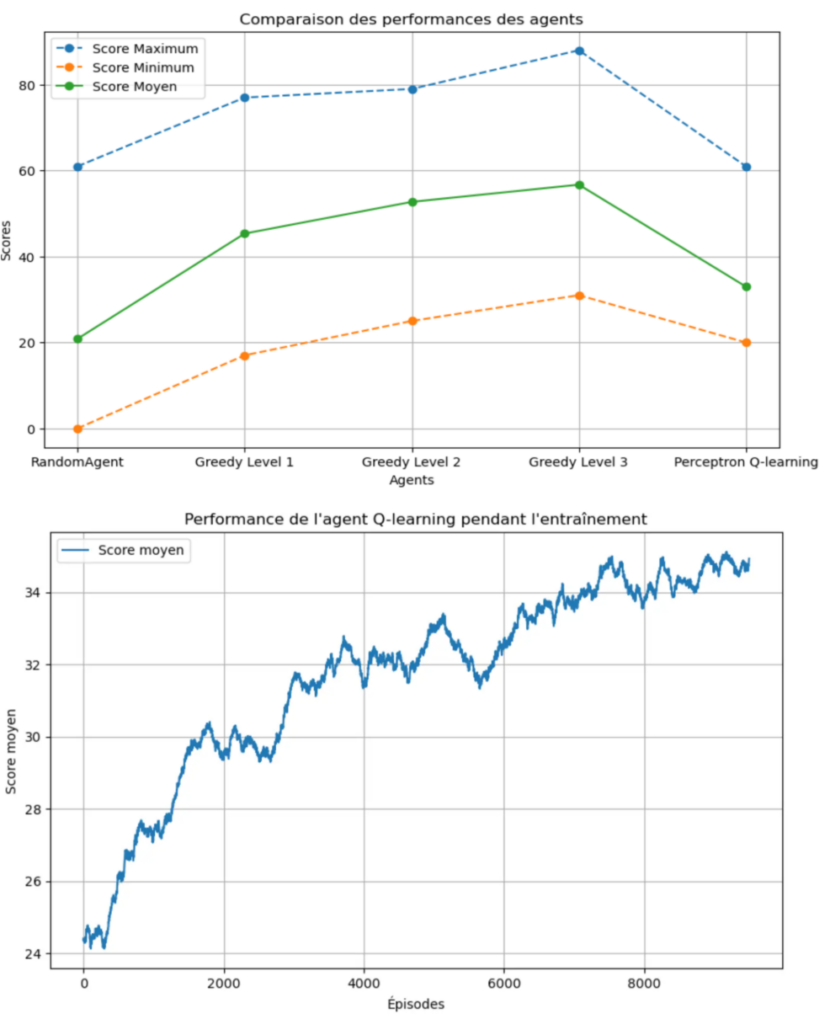

Developed an autonomous AI agent trained to play Yahtzee (YAMS) optimally. The project compares multiple RL strategies, including SARSA, Q-Learning, and Perceptron-based Function Approximation, to navigate a state space of over 1,200 possibilities and maximize long-term scoring across multi-turn episodes.

Code Overview

# Core Q-Learning Logic
def update_q_table(self, state, action, reward, next_state, alpha, gamma):
    best_next_action = np.argmax(self.q_table[next_state])
    td_target = reward + gamma * self.q_table[next_state][best_next_action]
    td_error = td_target - self.q_table[state][action]
    self.q_table[state][action] += alpha * td_error
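For contrast with the Q-learning update, the on-policy SARSA rule named in the description can be sketched as follows. This is a minimal illustration and not the project's code: the dict-of-lists `q_table`, the `sarsa_update` and `make_q_table` names, the action count, and the example states are all assumptions.

```python
from collections import defaultdict

N_ACTIONS = 3  # hypothetical action count, for illustration only


def make_q_table(n_actions=N_ACTIONS):
    """Q-table mapping each state to a list of action values (all zero)."""
    return defaultdict(lambda: [0.0] * n_actions)


def sarsa_update(q_table, state, action, reward, next_state, next_action,
                 alpha, gamma):
    """On-policy TD update: bootstrap from the action actually taken next."""
    td_target = reward + gamma * q_table[next_state][next_action]
    td_error = td_target - q_table[state][action]
    q_table[state][action] += alpha * td_error
    return td_error


q = make_q_table()
q["s1"] = [0.0, 2.0, 5.0]
# The behaviour policy picked action 1 in s1, not the greedy action 2.
sarsa_update(q, "s0", 0, reward=1.0, next_state="s1", next_action=1,
             alpha=0.5, gamma=0.9)
```

In this toy case `q["s0"][0]` becomes 1.4, since the target is 1 + 0.9 × 2. The Q-learning rule would bootstrap from the greedy value 5 instead and reach 2.75, which is the practical difference between the two methods.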

Technologies Used

YAMS Reinforcement Learning Agent

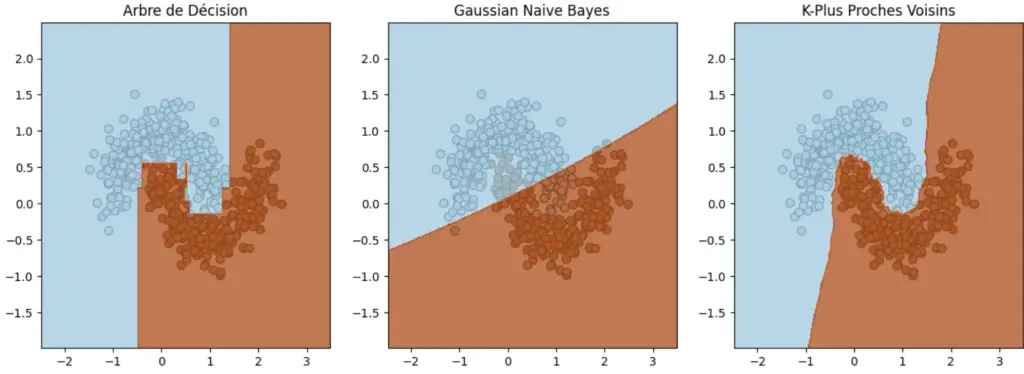

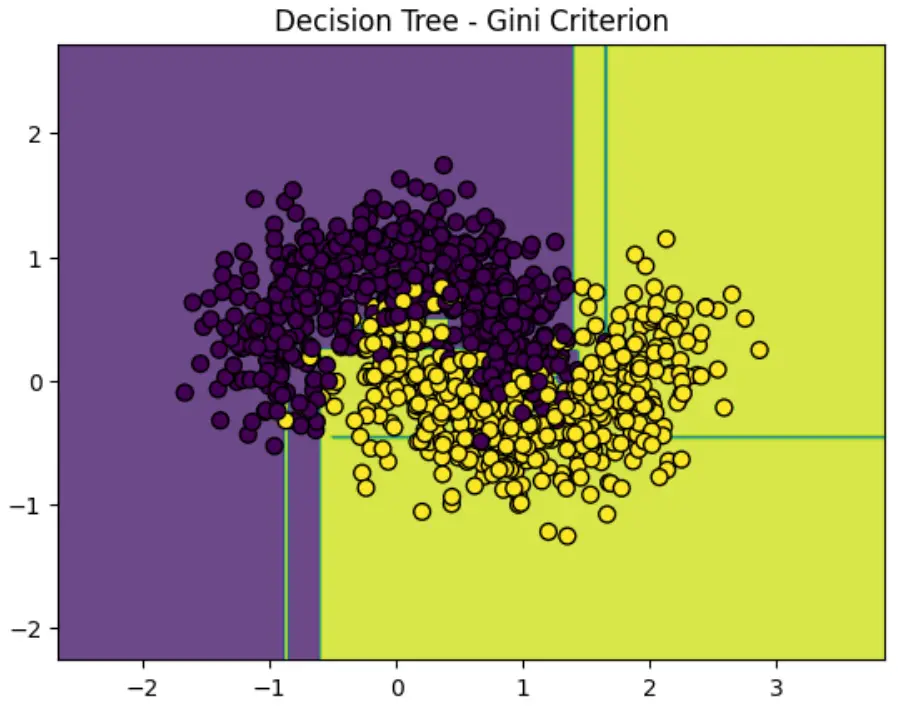

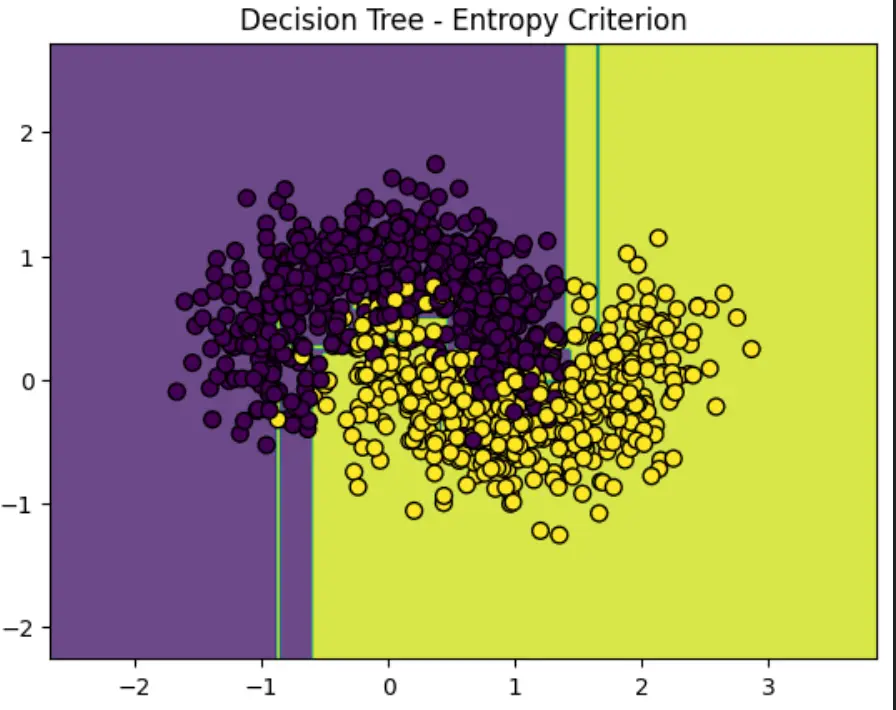

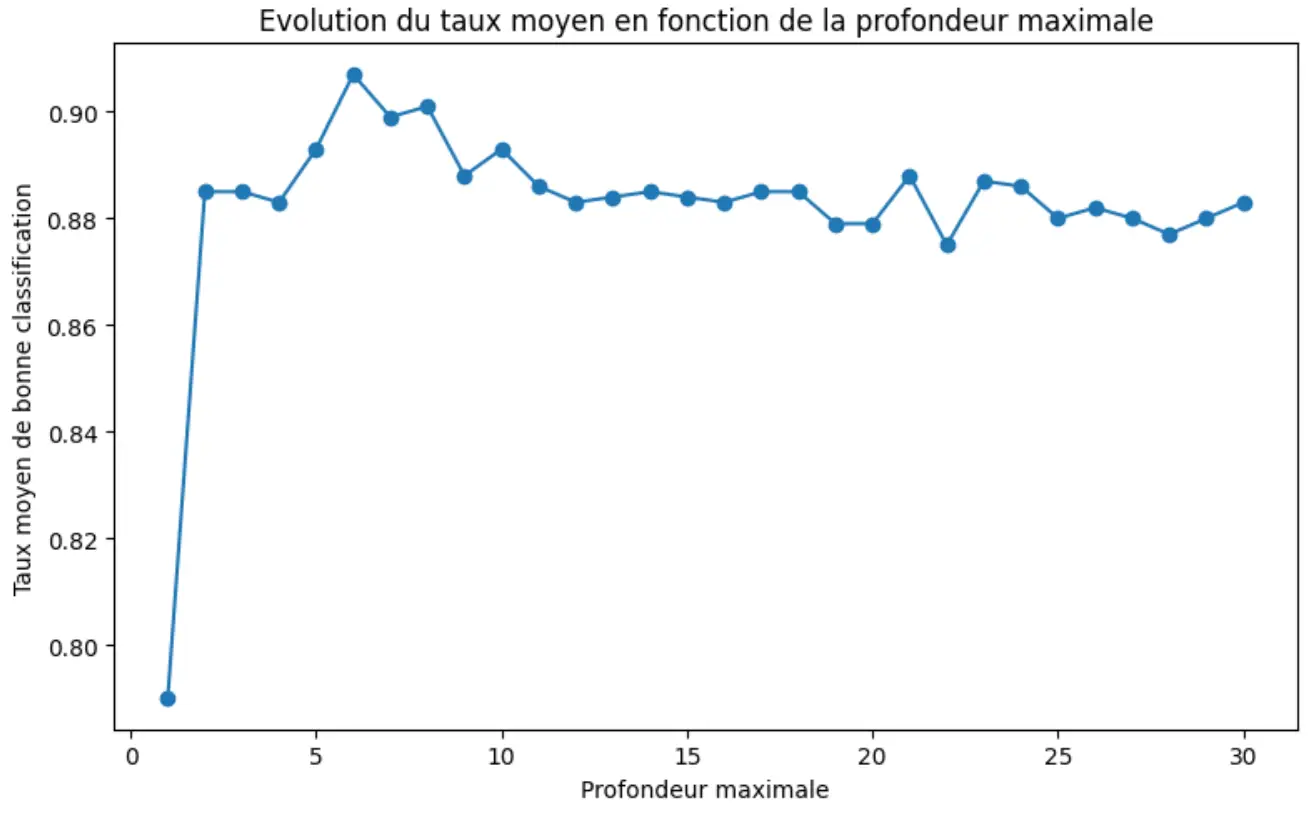

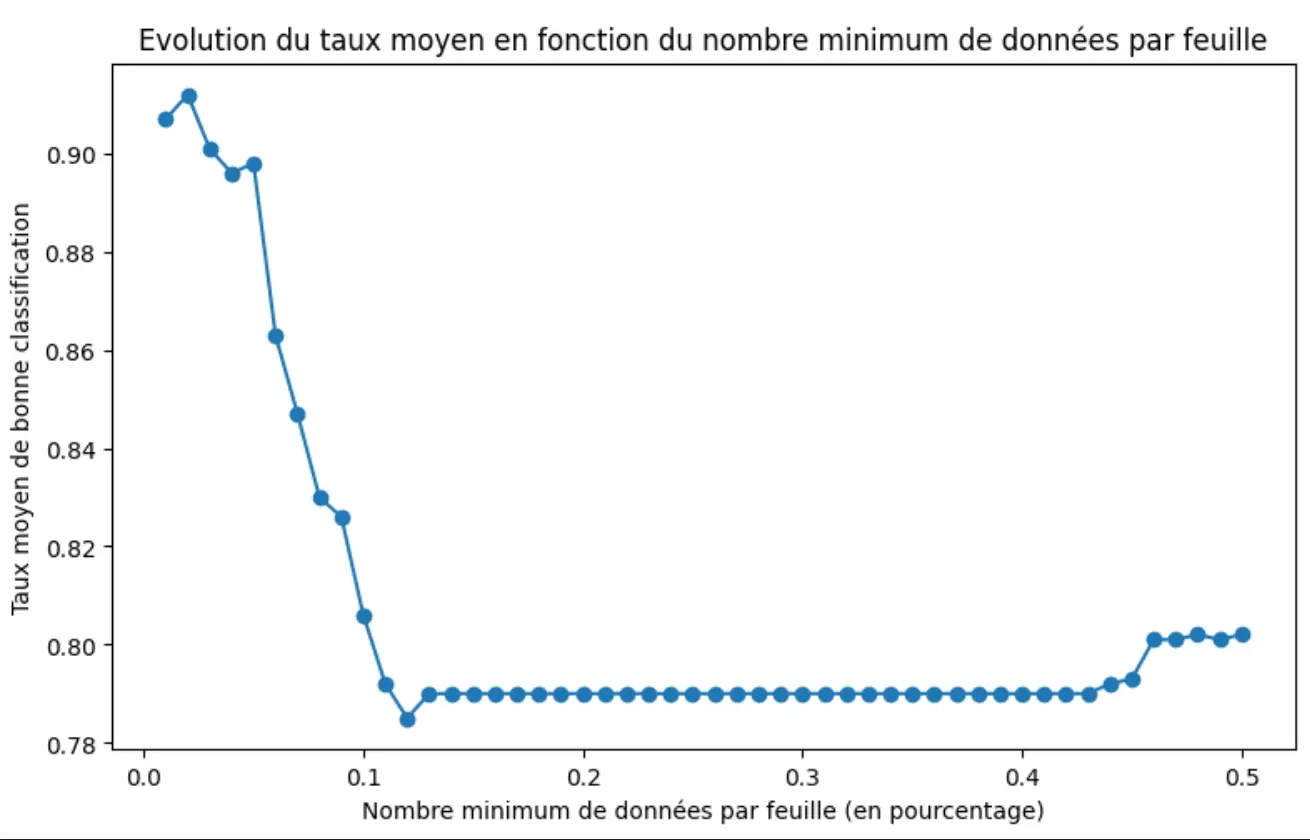

An in-depth exploration of Decision Tree Classifiers, evaluating their performance against Gaussian Naive Bayes and k-NN. The study uses the Iris and make_moons datasets to visualize complex decision boundaries and runs a hyper-parameter sweep over tree depth and leaf size to mitigate overfitting.

Code Overview

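# Mapping complex decision frontiers in 2D space
import numpy as np
import matplotlib.pyplot as plt

def plot_decision_frontiers(classifier, X, y, ax):
    h = .02  # grid step
    # Grid bounds: feature ranges padded by one unit on each side (padding is an assumption)
    x_min, x_max = X[:, 0].min() - 1, X[:, 0].max() + 1
    y_min, y_max = X[:, 1].min() - 1, X[:, 1].max() + 1
    xx, yy = np.meshgrid(np.arange(x_min, x_max, h),
                         np.arange(y_min, y_max, h))
    # Predict a class for every grid point, then reshape back to the grid
    Z = classifier.predict(np.c_[xx.ravel(), yy.ravel()])
    Z = Z.reshape(xx.shape)
    ax.contourf(xx, yy, Z, cmap=plt.cm.Paired, alpha=0.8)
    ax.scatter(X[:, 0], X[:, 1], c=y, edgecolors='k')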

Technologies Used

Decision Trees Optimization & Comparative Benchmarking

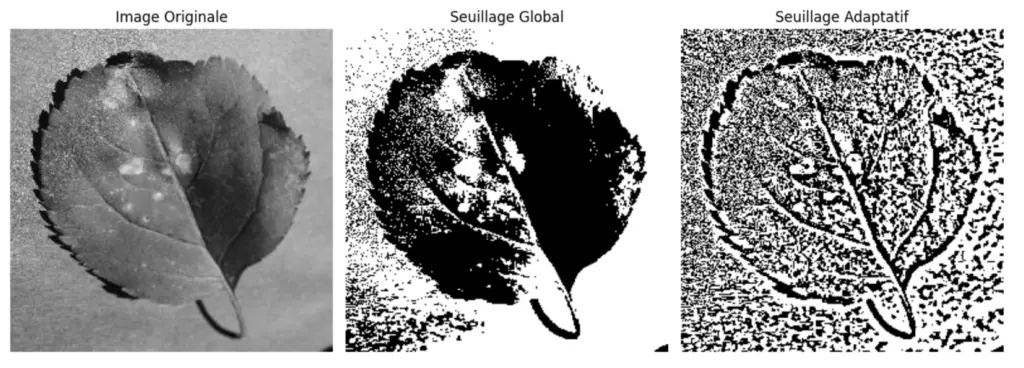

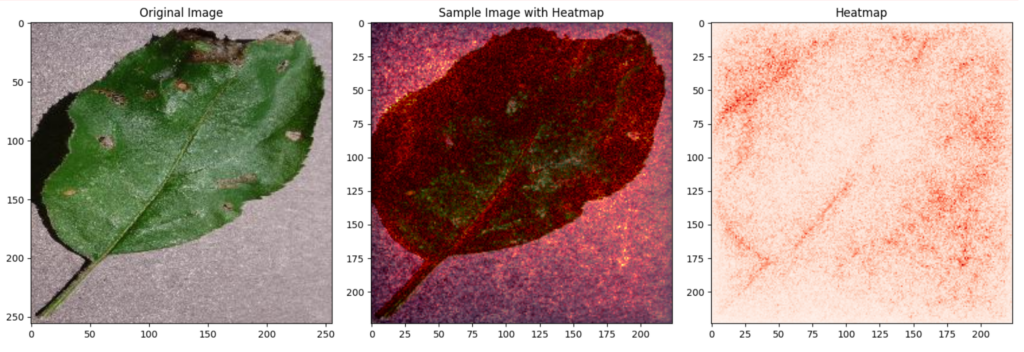

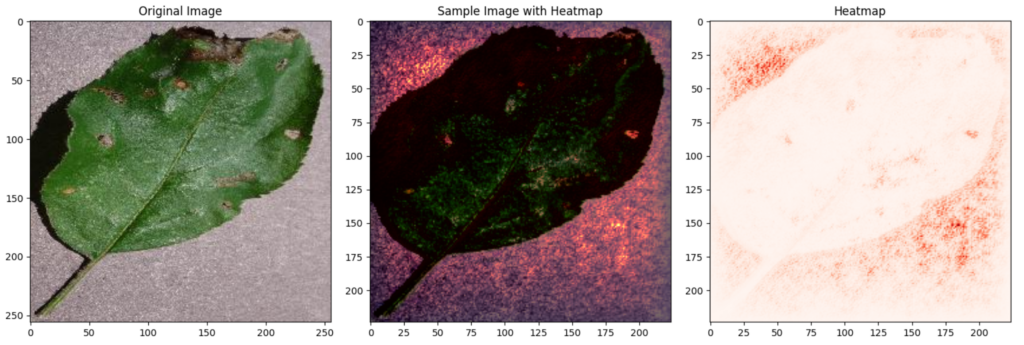

Implemented a diagnostic pipeline to identify Apple Black Rot using VGG-16 deep feature extraction. The project compares Fisher Discriminant Analysis against Difference-of-Means and utilizes Sensitivity Analysis to generate pixel-wise heatmaps, validating the model’s focus on pathological regions.

Code Overview

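# Computing pixel importance via backpropagation
import torch

def sensitivity_analysis(model, image, device):
    # Track gradients with respect to the input pixels
    image = image.detach().to(device).requires_grad_(True)
    output = model(image)
    output = torch.flatten(output, 1)
    # backward() needs a scalar, so reduce the output before backpropagating
    output.sum().backward()
    # Calculate gradient norm for heatmap
    gradient = image.grad.detach().squeeze().pow(2).sum(0).sqrt()
    return gradient.cpu().numpy()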

Technologies Used

Plant Disease Recognition & XAI

A comparative study of five fundamental classification algorithms: Minimum Euclidean Distance, Mahalanobis Distance, k-NN (Majority/Unanimity), and Parzen Windows. The project involves mathematical modeling of decision boundaries and performance optimization using 5-Fold Cross-Validation to determine hyper-parameters.

Code Overview

# Calculating distance relative to class covariance
def mahalanobis_distance(self, x, class_label):
    mu = self.mean_vectors[class_label]
    inv_cov = np.linalg.inv(self.covariance_matrices[class_label])
    delta = x - mu
    return np.sqrt(delta @ inv_cov @ delta.T)
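The two k-NN voting rules named in the description can be illustrated with a small standard-library sketch. It assumes that "unanimity" means a label is returned only when all k neighbours agree, with the sample rejected otherwise. The function name `knn_predict` and the toy data are hypothetical.

```python
import math
from collections import Counter


def knn_predict(train, query, k, rule="majority"):
    """Classify `query` from (point, label) pairs with a k-NN vote.

    rule="majority": return the most common label among the k neighbours.
    rule="unanimity": return a label only if all k neighbours agree,
    otherwise None (the sample is rejected).
    """
    neighbours = sorted(train, key=lambda pl: math.dist(pl[0], query))[:k]
    votes = Counter(label for _, label in neighbours)
    label, count = votes.most_common(1)[0]
    if rule == "unanimity" and count < k:
        return None
    return label


# Hypothetical toy data: two classes in the plane.
train = [((0.0, 0.0), "a"), ((0.0, 1.0), "a"), ((1.0, 0.0), "a"),
         ((3.0, 3.0), "b"), ((3.0, 4.0), "b"), ((4.0, 3.0), "b")]
```

A query deep inside one cluster gets the same answer under both rules, while a query between the clusters is labelled by the majority rule and rejected by the unanimity rule.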

Technologies Used

Statistical ML Multi-Classifier Benchmarking

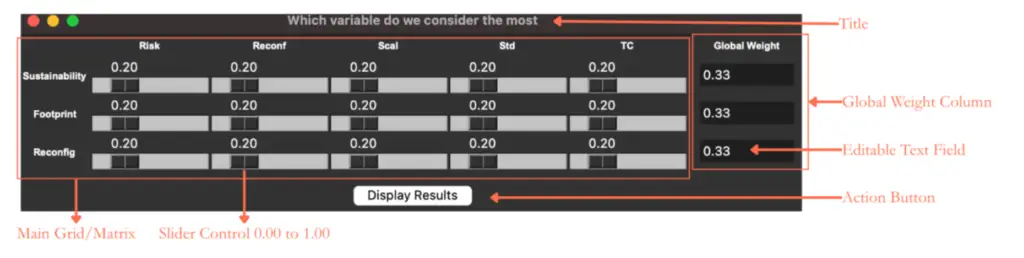

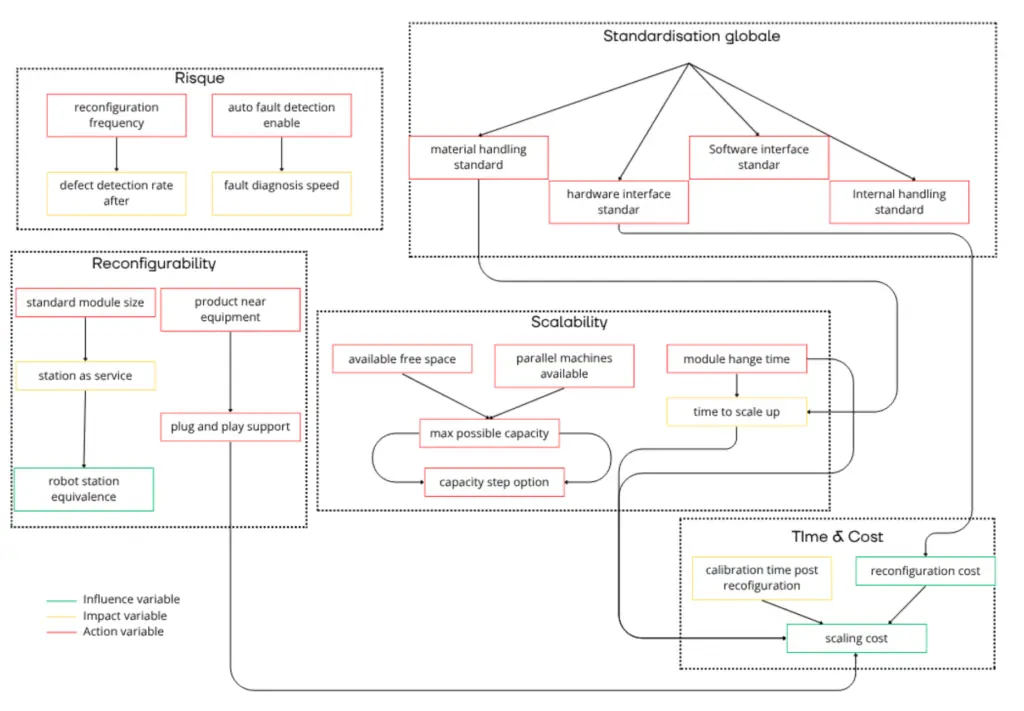

A high-level decision support system developed for the ASSAR Industrial Innovation Arena. This project uses Random Forest Regressors and SHAP interpretability to decode the complex relationships between 40+ industrial variables, identifying the primary drivers of Sustainability, Reconfigurability, and Footprint.

Code Overview

# Defining the objective function for industrial performance
def calculate_reward(row):
    reconfig = 0.4 * (1 / (1 + 0.5*row['module_reconfiguration_time'] + ...))
    sustain = 0.3 * (0.4*row['material_handling_standard'] + ...)
    footprint = 0.3 * (1/(1 + 0.0002*row['scaling_cost']) + ...)
    return reconfig + sustain + footprint

Technologies Used

Industrial Matrix Variable Importance & Decision Support

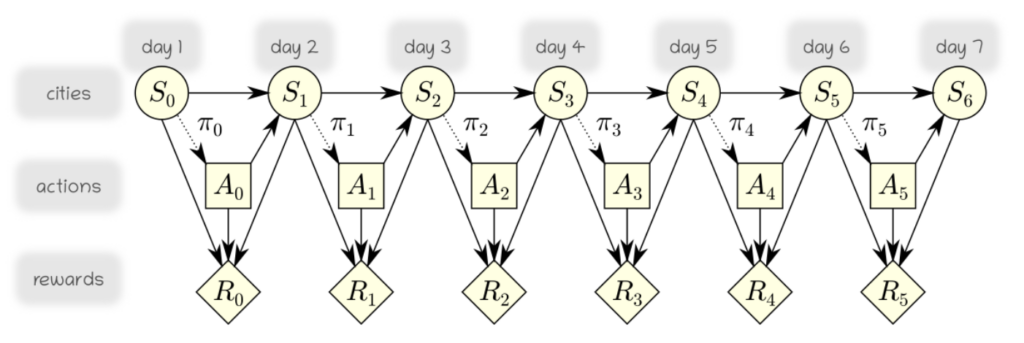

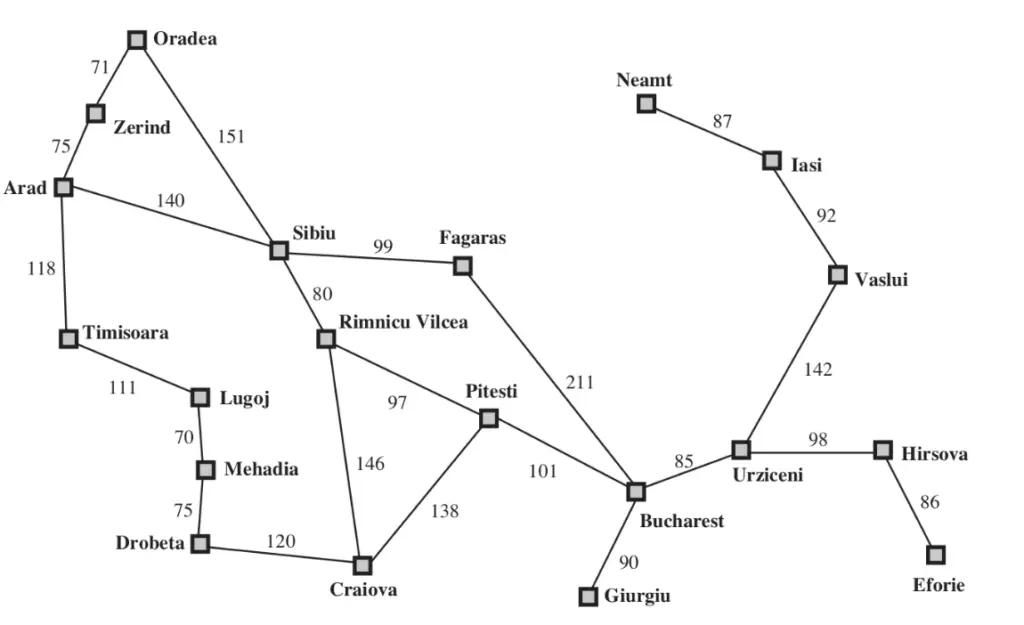

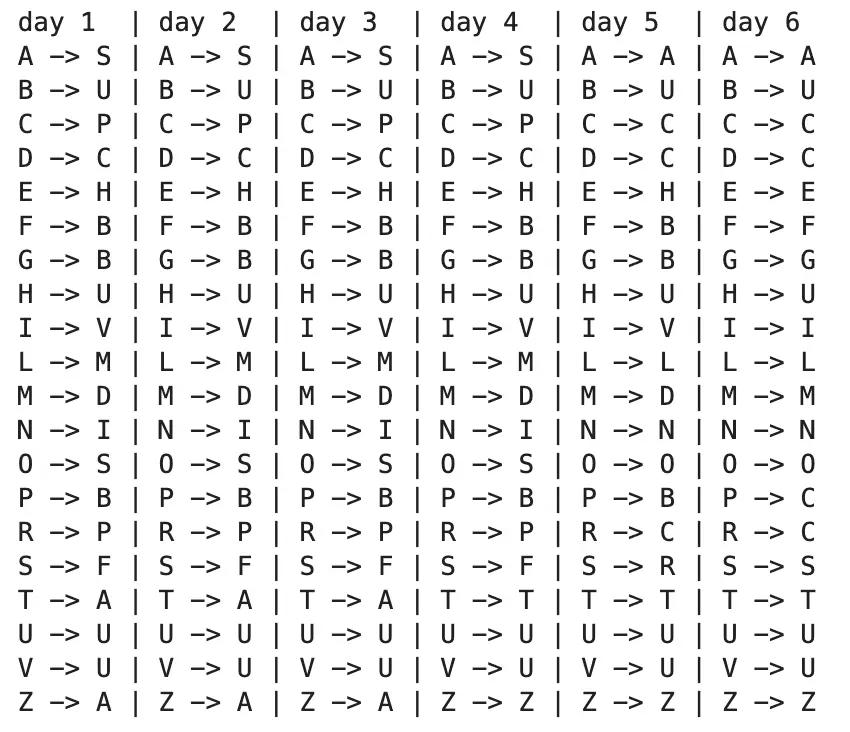

This project models a stochastic path-planning problem using a finite-horizon Markov Decision Process. An agent must reach a target city within a limited number of days while accounting for uncertain action outcomes. The optimal time-dependent policy is computed using backward dynamic programming.

Code Overview

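import numpy as np

def getPolicy():
    # P: transition probabilities; Rmid / Rlast: per-step and final-day rewards
    P, Rmid, Rlast = buildArrays()
    U = np.zeros(N)  # utility after the final day
    policy = np.zeros((H, N), dtype=int)  # best action for each day and state
    for t in reversed(range(H)):
        R = Rmid + (Rlast if t == H-1 else 0)
        Q = (P * (U + R)).sum(axis=-1)
        U = Q.max(axis=-1)
        policy[t] = Q.argmax(axis=-1)  # keep every day's policy, not only day 0
    return policy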
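The backward recursion can be tried end to end on a toy problem. The sketch below is a simplified, pure-Python illustration rather than the project's model: the two-state chain, the 0.8 success probability, the terminal-only reward, and the name `backward_induction` are all assumptions made for the example.

```python
def backward_induction(P, terminal_value, horizon):
    """Finite-horizon dynamic programming, solved from the last day backwards.

    P[s][a][s2] is the probability of moving from s to s2 under action a.
    Returns (policy, U): policy[t][s] is the best action on day t, and
    U[s] is the expected terminal value when starting from s on day 0.
    """
    n_states = len(P)
    U = list(terminal_value)
    policy = [None] * horizon
    for t in reversed(range(horizon)):
        Q = [[sum(p * U[s2] for s2, p in enumerate(P[s][a]))
              for a in range(len(P[s]))]
             for s in range(n_states)]
        policy[t] = [max(range(len(q)), key=q.__getitem__) for q in Q]
        U = [max(q) for q in Q]
    return policy, U


# Hypothetical toy problem: state 0 = on the road, state 1 = target city.
# Action 0 = wait, action 1 = travel (succeeds with probability 0.8).
P = [
    [[1.0, 0.0], [0.2, 0.8]],  # from state 0
    [[0.0, 1.0], [0.0, 1.0]],  # from state 1 (absorbing)
]
policy, U = backward_induction(P, terminal_value=[0.0, 1.0], horizon=2)
```

With two days available, `U[0]` comes out at 0.96, which matches the chance of at least one successful trip in two attempts (1 - 0.2²), and the policy chooses to travel from state 0 on both days.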

Technologies Used

Stochastic Path Planning with Markov Decision Processes

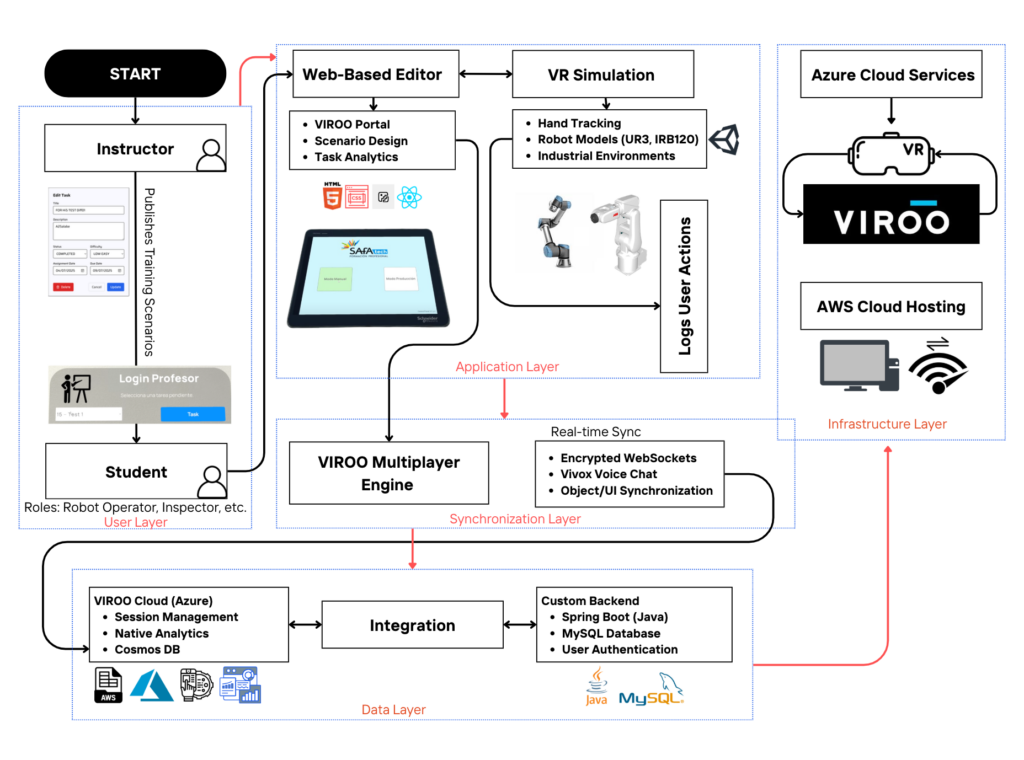

In collaboration with the University of Skövde, I participated in the development and evaluation of X-RAPT, a multi-user XR platform designed to bridge the gap between virtual simulation and industrial robot programming. The platform is built on a 5-layer architecture that integrates Unity-based Digital Twins of UR3 and ABB IRB120 robots with a Java Spring Boot and AWS backend to support collaborative training between students and instructors. My role focused on investigating the technical feasibility of cross-platform VR/PC synchronization and conducting usability testing.

Technologies Used

X-RAPT: XR-Based Robot Programming Training

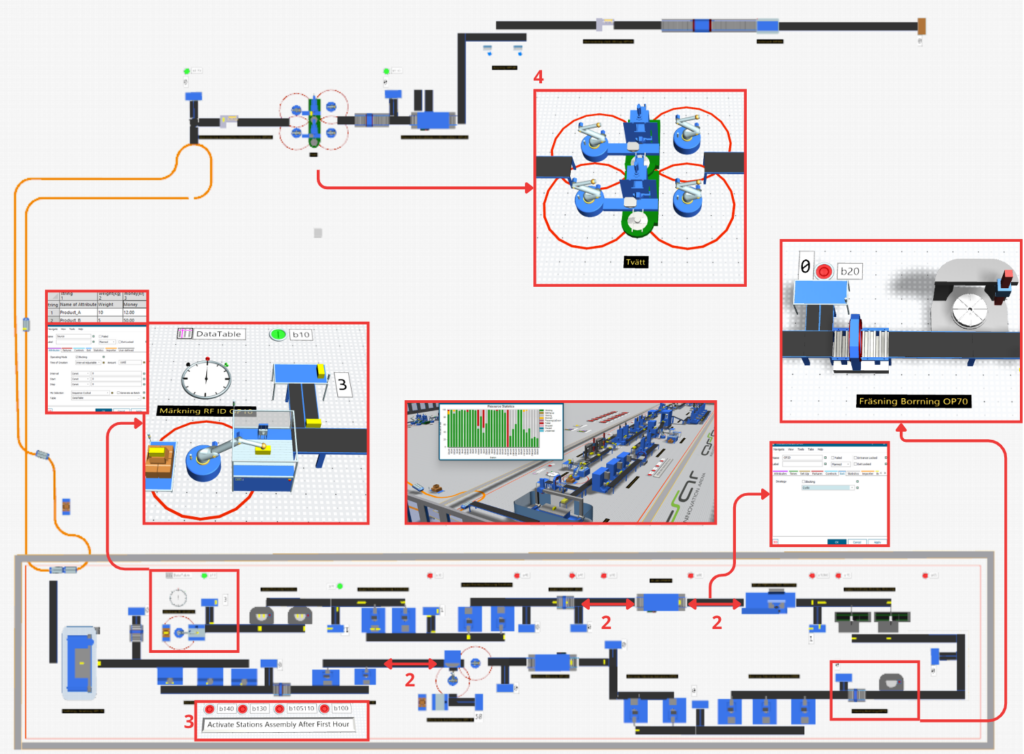

A 3D demonstrator designed to bring reconfigurable manufacturing system concepts to life at the ASSAR Industrial Innovation Arena. It focuses on bridging the gap between Plant Simulation models and immersive Virtual Reality environments, allowing stakeholders to virtually walk through a digitally reconfigurable factory.

The project involves

Technologies Used

REFUSE: 3D Demonstrator

Developed a surfing simulator that integrates Virtual Reality (VR) with real-time Robotics to replicate the physical sensation of riding ocean waves. By leveraging the Unity 3D engine and Meta Quest headsets, the project creates an immersive visual environment driven by real-world data.

Technologies Used

Surfing Simulator

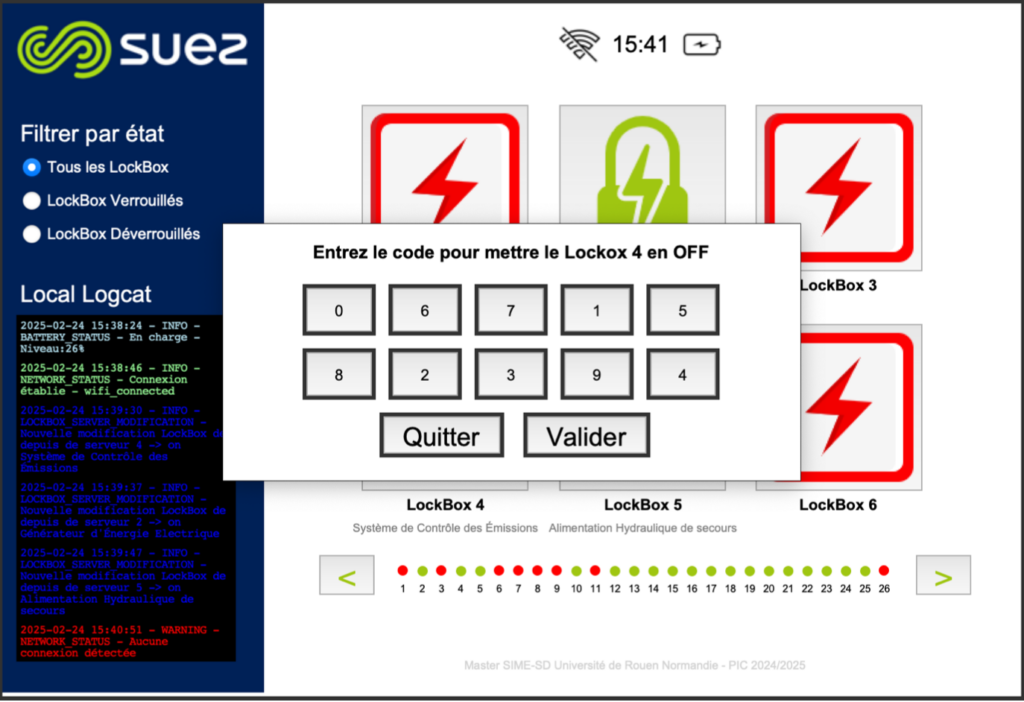

Developed an intelligent, connected lockout-tagout (LOTO) station designed to manage 26 electronic locks for industrial safety. The system ensures that maintenance operations are performed securely by controlling access to equipment through a centralized digital interface. The system is built on a Raspberry Pi 4/5 architecture and is engineered for extreme resilience. In the event of a network failure, the station automatically switches to a Local Mode, utilizing a local CSV-based database and a Tkinter-based HMI to ensure safety protocols are never interrupted.
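The switch to Local Mode can be pictured with a short standard-library sketch. Everything in it is an assumption for illustration, not the station's actual code: the `badge_id,lock_id` CSV schema, the `NetworkUnavailable` exception, and the function names are hypothetical.

```python
import csv
import io


class NetworkUnavailable(Exception):
    """Raised by the (hypothetical) central-server client when offline."""


def load_local_permissions(csv_text):
    """Parse a local CSV of `badge_id,lock_id` rows into a set of pairs."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {(row["badge_id"], int(row["lock_id"])) for row in reader}


def can_unlock(badge_id, lock_id, remote_check, local_permissions):
    """Ask the server first; fall back to the local table if it is unreachable.

    Returns (allowed, mode) where mode is "online" or "local".
    """
    try:
        return remote_check(badge_id, lock_id), "online"
    except NetworkUnavailable:
        return (badge_id, lock_id) in local_permissions, "local"


# Hypothetical local database contents.
LOCAL_CSV = "badge_id,lock_id\nA17,3\nB42,26\n"


def offline_server(badge_id, lock_id):
    """Stand-in for a server call made during a network failure."""
    raise NetworkUnavailable("central server unreachable")


permissions = load_local_permissions(LOCAL_CSV)
```

Returning the mode alongside the decision lets an operator interface show that the station is running on local data, so a denied request can be told apart from a connectivity problem.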

Technologies Used

Resilient Intelligent Safety System for Industrial Lockout-Tagout

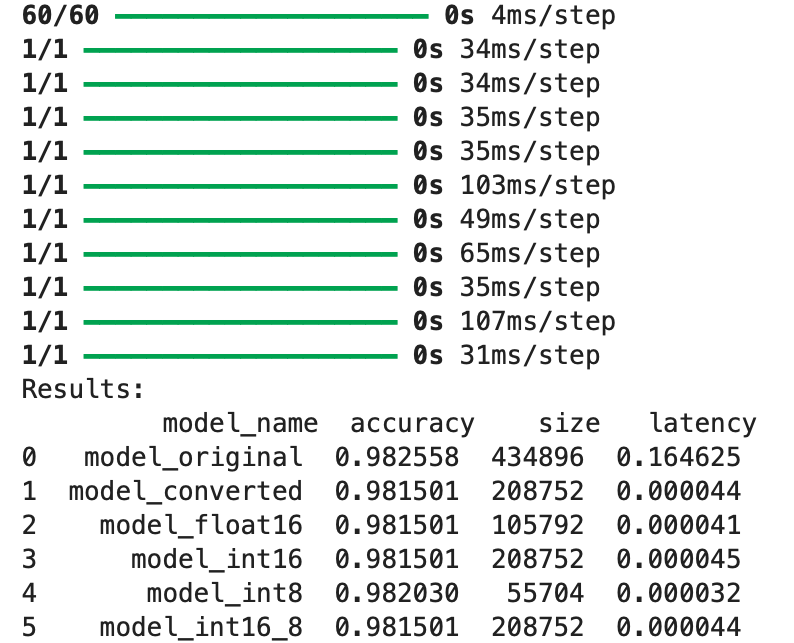

Developed as part of the “Specialized Architectures” module at the University of Rouen Normandy, this project focuses on the end-to-end pipeline of creating, training, and optimizing a neural network for embedded deployment.

The project addresses the challenge of deploying a 1024-feature classification model onto resource-constrained devices. By implementing Post-Training Quantization (PTQ) using TensorFlow Lite, I compressed the model weights and activations from 32-bit floating-point values to 8-bit integers.
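The arithmetic behind the float-to-int8 step can be sketched in plain Python. This shows the affine (scale and zero-point) mapping commonly used for 8-bit quantization, not the TensorFlow Lite converter itself. The function names and sample weights are hypothetical.

```python
def quantization_params(values, qmin=-128, qmax=127):
    """Derive scale and zero point so [min, max] maps onto [qmin, qmax]."""
    lo = min(min(values), 0.0)  # include 0 so it stays exactly representable
    hi = max(max(values), 0.0)
    scale = (hi - lo) / (qmax - qmin) or 1.0  # avoid a zero scale
    zero_point = int(round(qmin - lo / scale))
    return scale, zero_point


def quantize(values, scale, zero_point, qmin=-128, qmax=127):
    """Map floats to integers, clamping to the int8 range."""
    return [max(qmin, min(qmax, int(round(v / scale)) + zero_point))
            for v in values]


def dequantize(q_values, scale, zero_point):
    """Recover approximate floats from the quantized integers."""
    return [scale * (q - zero_point) for q in q_values]


weights = [-1.0, -0.25, 0.0, 0.5, 1.0]
scale, zp = quantization_params(weights)
q = quantize(weights, scale, zp)
restored = dequantize(q, scale, zp)
```

Each restored value differs from the original by at most about one quantization step (`scale`), which is the precision traded for a 4x reduction in storage per value.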

Technologies Used

Deep Learning Model Compression via TFLite Quantization

This project serves as an interactive bridge between instructors and students. The platform allows professors to upload presentations (PPT/PDF), which are automatically processed into web-optimized images using a Python-based conversion engine.

The core of the system is a Node.js/Socket.io server that manages real-time slide synchronization, allowing a “Host” to control the viewing experience of hundreds of connected “Students” simultaneously. Beyond simple viewing, the system captures granular engagement data, including time spent on each slide, “like/dislike” reactions, and live comments, all of which are persisted in a MongoDB Atlas database for post-session analysis.

Technologies Used

Real-Time Interactive Presentation & Engagement Analytics Platform

Developed as a digital hub for the GreenGENIUM Composting Initiative, this project supports a student-driven movement across the INGENIUM European University alliance. The platform serves as the central coordination point for transforming organic waste from university cafeterias into nutrient-rich compost, facilitating a circular economy transition at TUIASI (Romania), University of Rouen Normandy (France), and MTU (Ireland).

Technologies Used

Cross-Campus Circular Economy & Sustainability Platform

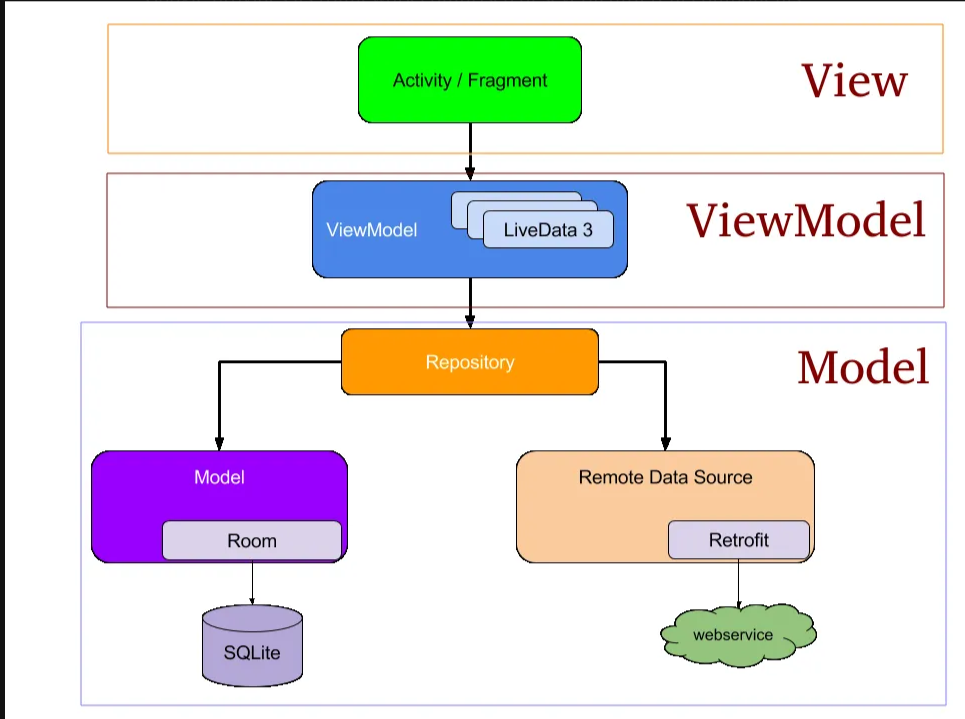

// Retrofit interface for API calls
interface ApiService {
    @GET("/data")
    suspend fun fetchData(): List<DataItem>
}

// Room entity for local storage
@Entity
data class DataItem(
    @PrimaryKey val id: Int,
    val name: String,
    val value: String
)

Technologies Used

Mobile Data Management App